It didn’t sound like a radical statement at first. Just a quiet admission, almost matter-of-fact:

“We don’t trust AI.”

But coming from the Tech Lead at the Open Knowledge Foundation (OKFN), it landed differently.

Not as fear. Not as resistance.

But as a signal—something in the system isn’t ready yet.

A Moment Inside an Open Data Portal

Imagine this.

A journalist logs into an open data portal looking for public spending records. Instead of digging through raw datasets, she uses a shiny new AI assistant powered by a Large Language Model (LLM).

She types:

“Show me unusual spikes in healthcare spending last year.”

The AI responds instantly. Clean summary. Confident tone. Clear conclusion.

But here’s the problem:

She has no idea how the AI got there.

- Did it miss edge cases?

- Did it hallucinate trends?

- Did it favor certain regions over others?

And most importantly:

Can she trust it enough to publish?

This is where the tension lives.

The Promise vs. The Reality

LLMs feel like magic in open data ecosystems. They lower barriers, translate complexity, and unlock insights for non-technical users.

But under the surface, things get messy.

| What AI Promises | What Actually Happens (Today) |
|---|---|
| Instant insights | Sometimes plausible guesses |
| Natural language access | Hidden assumptions |
| Democratized data use | Uneven understanding |
| Scalable analysis | Hard-to-trace reasoning |

The gap between appearance and reliability is exactly why trust is fragile.

Why Open Data Makes This Harder (Not Easier)

Open data sounds like the perfect match for AI: transparent, accessible, public.

Ironically, it makes the risks sharper.

Because open data is often:

- Messy – incomplete, inconsistent, outdated

- Context-heavy – requires domain knowledge to interpret

- Politically sensitive – tied to real-world decisions and accountability

Now layer AI on top—a system that:

- Generates answers even when uncertain

- Struggles to explain its reasoning clearly

- Can amplify hidden biases in datasets

You don’t just get smarter access.

You get faster ways to be wrong.

The Real Issue: Not Capability—But Accountability

Most discussions frame this as a technical challenge.

It’s not.

It’s a responsibility problem.

When an AI system inside an open data portal produces an answer:

- Who is accountable for errors?

- How do users verify outputs?

- Where does the “source of truth” live?

Without clear answers, trust doesn’t scale—no matter how good the model gets.

A More Human Way to Think About AI Trust

Think of LLMs not as analysts, but as interns.

Very fast interns. Very confident interns.

But still interns.

You wouldn’t publish their work without review.

You wouldn’t let them operate without supervision.

You wouldn’t assume they understand nuance perfectly.

That mental model alone can prevent a lot of misuse.

What This Means for Builders (In Practice)

If you’re building with AI—especially in data-heavy environments—this moment is an opportunity, not a setback.

The winners won’t be the ones with the smartest models.

They’ll be the ones who design for trust.

1. Show Your Work (Literally)

Don’t just give answers. Show:

- Data sources

- Query paths

- Confidence levels

Actionable tip:

Add a “Why am I seeing this?” button to every AI-generated result.

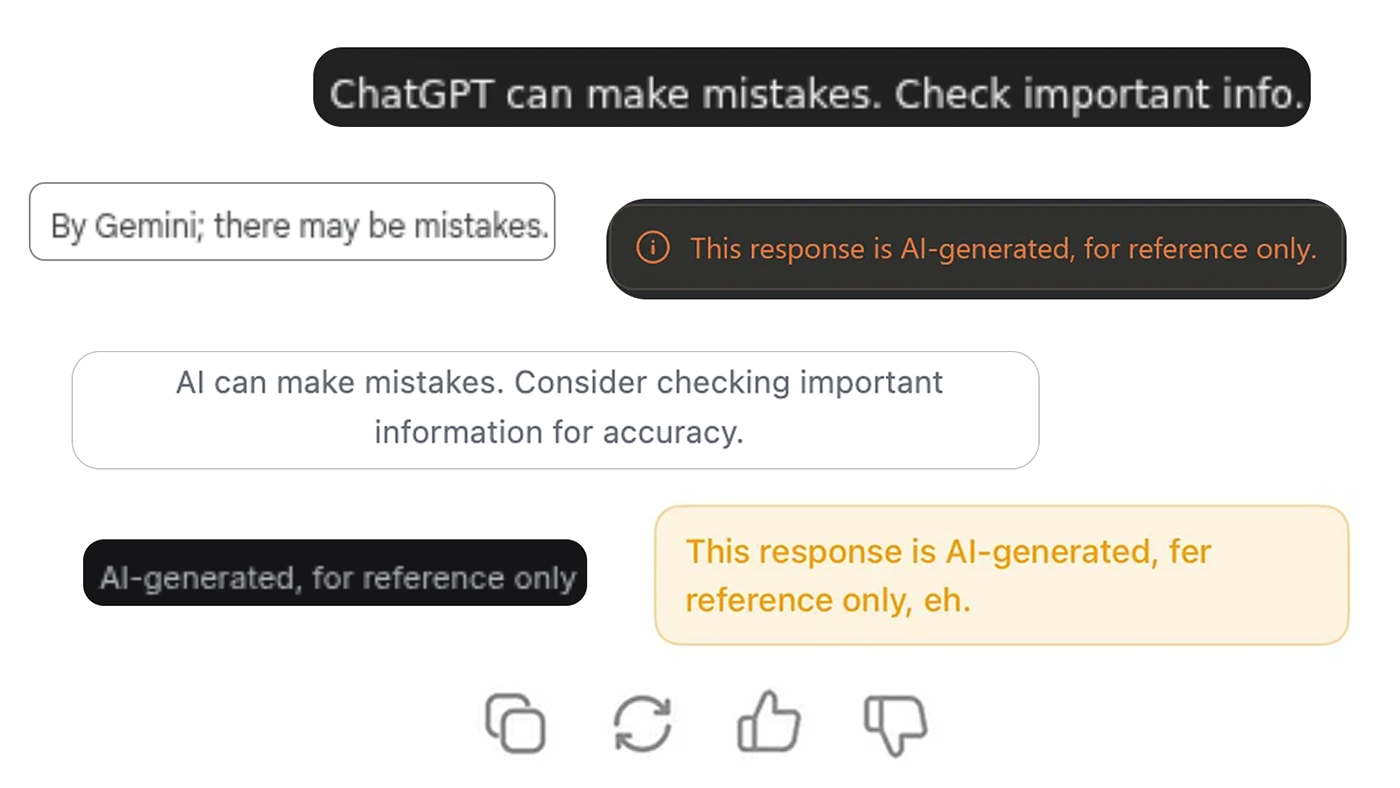
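One way to picture "showing your work" is a result object that carries its own evidence. The sketch below is a minimal illustration, not a description of any portal's actual implementation: the `ExplainedAnswer` class, its field names, and the dataset URL in the usage note are all hypothetical, and how a confidence value would be estimated is deliberately left open.

```python
from dataclasses import dataclass


@dataclass
class ExplainedAnswer:
    """An AI-generated answer bundled with the evidence behind it (hypothetical schema)."""

    summary: str
    data_sources: list[str]  # dataset identifiers or URLs the answer drew on
    query_path: list[str]    # ordered steps (filters, aggregations) that were run
    confidence: float        # 0.0 to 1.0; how this is estimated is out of scope here

    def why_am_i_seeing_this(self) -> str:
        """Build the text a "Why am I seeing this?" button could display."""
        lines = [f"Answer: {self.summary}", "Data sources:"]
        lines += [f"  - {src}" for src in self.data_sources]
        lines.append("Query path:")
        lines += [f"  {i}. {step}" for i, step in enumerate(self.query_path, start=1)]
        lines.append(f"Confidence: {self.confidence:.0%}")
        return "\n".join(lines)
```

A caller might build `ExplainedAnswer("Spending may have risen in Q3", ["https://example.org/dataset/health-spending"], ["filter year", "group by quarter"], 0.6)` and render `why_am_i_seeing_this()` next to the summary, so the sources and steps are one click away from the answer.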

2. Design for Doubt, Not Blind Confidence

Most AI interfaces are optimized for smoothness.

Instead, introduce friction where it matters:

- Highlight uncertainty

- Flag assumptions

- Offer alternative interpretations

Real-world example:

Instead of saying:

“Healthcare spending increased due to policy changes”

Say:

“Based on available data, spending may have increased. This could be influenced by factors X and Y.”
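The rewording above can be approximated in code by tying the phrasing to a confidence value. This is only a sketch under stated assumptions: the `hedge` function is hypothetical, and the 0.9 and 0.6 thresholds are arbitrary placeholders rather than calibrated cut-offs.

```python
def hedge(claim: str, confidence: float, possible_factors: list[str]) -> str:
    """Wrap a finding in wording that matches how sure the system is.

    The thresholds below are illustrative placeholders, not calibrated values.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")

    if confidence >= 0.9:
        qualifier = "likely"
    elif confidence >= 0.6:
        qualifier = "possibly"
    else:
        qualifier = "not clearly"

    sentence = f"Based on available data, it is {qualifier} the case that {claim}."
    if possible_factors:
        # Present factors as candidates, never as established causes.
        sentence += " This could be influenced by: " + ", ".join(possible_factors) + "."
    return sentence
```

For instance, `hedge("healthcare spending increased", 0.7, ["policy changes"])` yields a sentence that says "possibly" and lists policy changes as a candidate factor instead of asserting a cause.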

3. Keep Humans in the Loop (Strategically)

Full automation sounds efficient. It’s often dangerous.

Better approach:

- AI suggests → Human verifies → System learns

Especially in journalism, policy, and research contexts.
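The suggest, verify, learn loop can be sketched as a small review queue. Everything here is hypothetical (the `ReviewQueue`, `Suggestion`, and `Status` names included), and the "learns" step is reduced to collecting reviewer feedback; what a system then does with that feedback is beyond this sketch.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Suggestion:
    text: str
    status: Status = Status.PENDING
    reviewer_note: str = ""


class ReviewQueue:
    """Holds AI suggestions until a human approves or rejects them."""

    def __init__(self) -> None:
        self._items: list[Suggestion] = []

    def suggest(self, text: str) -> Suggestion:
        """Record an AI suggestion; it starts as PENDING."""
        item = Suggestion(text)
        self._items.append(item)
        return item

    def review(self, item: Suggestion, approved: bool, note: str = "") -> None:
        """Record the human decision and any explanation."""
        item.status = Status.APPROVED if approved else Status.REJECTED
        item.reviewer_note = note

    def publishable(self) -> list[Suggestion]:
        """Only human-approved suggestions may leave the queue."""
        return [i for i in self._items if i.status is Status.APPROVED]

    def feedback(self) -> list[tuple[str, str]]:
        """Rejected suggestions with reviewer notes, kept for later evaluation."""
        return [(i.text, i.reviewer_note) for i in self._items
                if i.status is Status.REJECTED]
```

The design choice worth noting is that nothing is publishable by default: a suggestion that nobody has reviewed stays pending and never appears in `publishable()`.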

4. Make Bias Visible, Not Hidden

Bias isn’t avoidable, but it is manageable.

- Show dataset limitations

- Indicate missing regions or demographics

- Let users explore raw data alongside AI summaries
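Making gaps visible can start with something as plain as a coverage report shown beside the AI summary. The following is a minimal sketch, assuming records are dictionaries with a `region` field and that the portal knows which regions it expects; the `coverage_report` function and its output keys are hypothetical.

```python
def coverage_report(records: list[dict], expected_regions: set[str],
                    region_key: str = "region") -> dict:
    """Summarize which expected regions are missing or thinly represented."""
    rows_per_region: dict[str, int] = {}
    rows_without_region = 0

    for record in records:
        region = record.get(region_key)
        if region:
            rows_per_region[region] = rows_per_region.get(region, 0) + 1
        else:
            # Rows with no region cannot be attributed and are counted separately.
            rows_without_region += 1

    return {
        "missing_regions": sorted(expected_regions - set(rows_per_region)),
        "rows_per_region": rows_per_region,
        "rows_without_region": rows_without_region,
    }
```

Displaying `missing_regions` and `rows_without_region` next to a generated summary gives readers a quick sense of what the answer could not have taken into account.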

5. Treat Transparency as a Feature (Not Compliance)

Don’t hide complexity.

Turn it into a product advantage.

Users want to trust you—they just need reasons to.

The Bigger Shift Happening

What OKFN’s Tech Lead pointed out isn’t just about AI.

It reflects a deeper shift in the tech world:

We’re moving from “Can we build this?” to “Should we trust this?”

And that’s a much harder question.

Because it doesn’t have a purely technical answer.

Where This Leaves Us

AI in open data isn’t going away. It’s too useful.

But blind adoption isn’t the path forward either.

The future likely looks like this:

- AI as a guide, not a decision-maker

- Interfaces that explain, not just answer

- Systems designed for verification, not just speed

A Final Thought

Trust isn’t built by making AI smarter.

It’s built by making its behavior understandable.

And maybe that’s what “We don’t trust AI” really means:

Not that AI is useless.

But that we haven’t yet designed it in a way that deserves trust.

AI-generated summary. Full credits:

Source: Okfn.org.

Author: Patricio Del Boca.

Date: 2026-04-07T18:02:09Z.

Read more: https://blog.okfn.org/2026/04/07/an-honest-reflection-on-the-integration-of-llms-into-open-data-portals/.
